Adaptive image caching based on network speed with Workbox.js

Progressive Web Apps: the term refers to web applications that carry “mobile” features without being native apps (not written for Android / iOS in their respective languages). The headline feature is offline capability, meaning data for a given web app stays available even when the user has no connection.

Special thanks to Jeff Posnick for his support creating the sample application and reviewing this article.

We’ll walk through building a web application that works offline and caches image assets based on the network speed.

In a previous article we covered how to adaptively load images based on network speed. If you’d like the background on that concept, read that post first.

Get the code and see the app

To see the application, visit https://workbox-cnofarfszr.now.sh/ (best viewed with Google Chrome). Open Chrome DevTools to see Workbox output. You can also take the application offline to test its offline behaviour.

The source code: https://github.com/tpiros/cloudinary-workbox-example.

If you’re running the application locally, launch it on localhost, not on an IP address or 127.0.0.1. Otherwise it won’t function. Service workers require a Secure Context. We either need https or localhost. This is determined by window.isSecureContext.

The architecture

A relatively simple application with this structure:

- An Express application server that does two things:
  - Acts as a REST API server returning data
  - Acts as an HTTP server displaying the web app
- A single entry point to the application
- A single JS file to retrieve and display data from the API

The API & HTTP server

The REST API and HTTP server. Nothing exotic here:

const express = require('express');

const app = express();

const news = require('./data').news;

app.get('/api/news', (req, res) => res.status(200).json(news));

app.use(express.static(`${__dirname}/build`));

const server = app.listen(8081, () => {

const host = server.address().address;

const port = server.address().port;

console.log(`App listening at http://${host}:${port}`);

});

The news variable is an array of objects containing news items, each with a title, slug, image and added property:

const currentDateTime = new Date();

const news = [

{

title: 'Roma beats Torino',

slug:

'Edin Dzeko scored a late stunning goal to snatch a 1-0 win for Roma at Torino in their opening Serie A game on Sunday.',

image: 'https://res.cloudinary.com/tamas-demo/image/upload/pwa/dzeko.jpg',

added: new Date(

currentDateTime.getFullYear(),

currentDateTime.getMonth(),

currentDateTime.getDate(),

currentDateTime.getHours() - 1,

currentDateTime.getMinutes() - Math.floor(Math.random() * 4 + 1),

0

),

},

{

// ... etc

},

];

module.exports = {

news,

};

Notice the image property references an image asset previously uploaded to Cloudinary.

The application

The application code itself. We start with a simple HTML page and some CSS:

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="utf-8" />

<title></title>

<meta name="description" content="" />

<meta name="author" content="Tamas Piros" />

<link rel="stylesheet" href="bootstrap.css" />

<link rel="stylesheet" href="app.css" />

<script src="app.js"></script>

</head>

<body>

<h1>Sports Time - Your favourite online sports magazine</h1>

<div class="container">

<div class="row">

<div class="card-deck"></div>

</div>

</div>

</body>

</html>

.container {

padding: 1px;

}

body {

background-color: rgba(255, 255, 255, 0);

}

html {

background: url('https://res.cloudinary.com/tamas-demo/image/upload/pwa/background.jpg')

no-repeat center center fixed;

-webkit-background-size: cover;

-moz-background-size: cover;

-o-background-size: cover;

background-size: cover;

}

h1 {

background: yellow;

}

The HTML references app.js. That file does two things: it registers a service worker (more on that shortly) and uses the Fetch API to pull data from our API and inject the right elements into the HTML:

function generateCard(title, slug, added, img) {

// generates & constructs the card's HTML

}

fetch('/api/news')

.then((response) => response.json())

.then((news) => {

console.log(news);

news.forEach((n) => {

const today = new Date();

const added = new Date(n.added);

const difference = parseInt((today - added) / (1000 * 3600));

const card = generateCard(n.title, n.slug, difference, n.image);

const cardDeck = document.querySelector('.card-deck');

cardDeck.appendChild(card);

});

});

Time to serve this and see how things look.

You may need to tweak your Express server to serve files from the right location via app.use(express.static(`${__dirname}/build`)). The build folder referenced here is something we’ll use later.

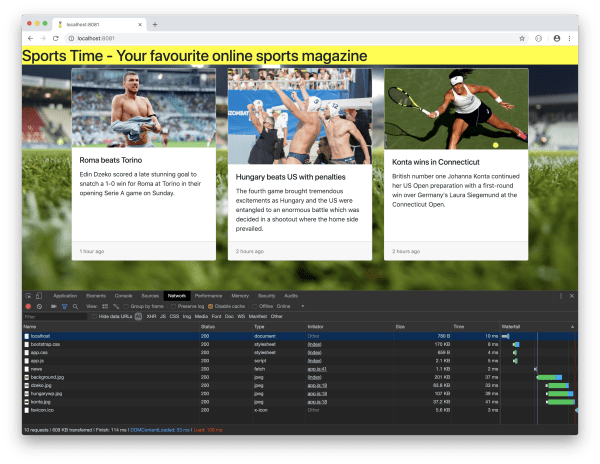

Ten requests, 609 KB transferred, 114 milliseconds (on my machine; your results will differ). Those ten requests are a mix of image fetches and API calls.

Taking the application offline at this point gives us the infamous “downasaur”.

I’d recommend Chrome for testing. Open DevTools, find the ‘Network’ tab, and tick the checkbox to bring the application offline.

We’ve got an application, but it’s not a PWA by any stretch. It collapses when offline. There are performance improvements we could make too. Let’s look at those.

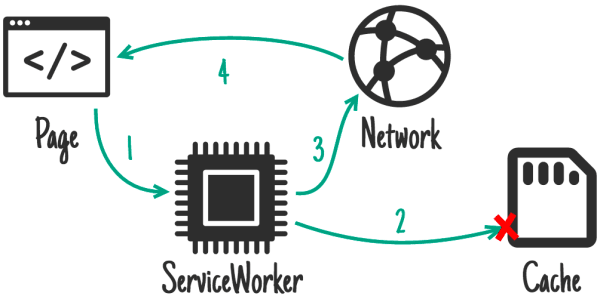

Service Workers

Think of the service worker as a proxy sitting between the browser and the application. Service Workers are written in JavaScript and can intercept the application’s normal request/response cycle. Here’s how to register one:

// app.js

if ('serviceWorker' in navigator) {

window.addEventListener('load', () => {

navigator.serviceWorker

.register('/sw.js')

.then((registration) => {

console.log(`Service Worker registered! Scope: ${registration.scope}`);

})

.catch((err) => {

console.log(`Service Worker registration failed: ${err}`);

});

});

}

Once that’s wired up, we need to build out the Service Worker itself. The code above references sw.js, which will hold the Service Worker logic.

Workbox

Workbox is a set of JavaScript libraries from Google that bolt offline support onto web applications.

Workbox ships with solid boilerplate that strips back the pain of working with Service Workers. Out of the box, it handles precaching, request routing and various offline strategies.

You may have used or heard of sw-precache and sw-toolbox. Think of Workbox as those two tools welded together and enhanced.

Strategies

A quick demonstration of why Workbox helps. A common caching strategy for offline-first apps: if a resource is in the cache, return it from there; if not, fetch from the network.

The typical code looks like this:

self.addEventListener('fetch', (event) => {

event.respondWith(

caches.match(event.request).then((response) => {

return response || fetch(event.request);

})

);

});

Now imagine we want that strategy for images only. The code gets gnarly fast.
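To make that concrete, here is a rough sketch of what an image-only variant could look like when written by hand. The cache and the network call are passed in as parameters, an assumption made purely so the logic can run outside a Service Worker. The names isImageRequest and cacheFirstForImages are hypothetical and not part of any library. Unlike the handler above, this version also stores the response on a cache miss:

```javascript
// Hypothetical helper: decides by file extension whether a URL is an image.
function isImageRequest(url) {
  return /\.(jpe?g|png|gif|webp)$/i.test(new URL(url).pathname);
}

// Cache-first lookup for images only.
//   cache   - any Map-like object (stands in for the Cache Storage API)
//   fetchFn - async function (url) => response (stands in for fetch)
async function cacheFirstForImages(url, cache, fetchFn) {
  if (!isImageRequest(url)) {
    return fetchFn(url); // not an image: go straight to the network
  }
  if (cache.has(url)) {
    return cache.get(url); // cache hit: no network request at all
  }
  const response = await fetchFn(url); // cache miss: fetch, then store
  cache.set(url, response);
  return response;
}
```

Even in this simplified form there are three code paths to keep straight, and a real Service Worker would also have to deal with cloning responses and error handling.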

With Workbox, we need this:

workbox.routing.registerRoute(

new RegExp('/images/'),

workbox.strategies.cacheFirst()

);

This matches requests against the regex and applies the cache-first strategy. Much less noise. The cacheFirst() method can also accept an options object for further customisation.

So Workbox gives us boilerplate for working with Service Workers. The question: how do we actually use it?

The Service Worker file

Create sw.js and drop this in at the top:

importScripts(

'https://storage.googleapis.com/workbox-cdn/releases/3.6.1/workbox-sw.js'

);

if (workbox) {

console.log('Workbox is loaded');

} else {

console.log('Could not load Workbox');

}

importScripts() is a method we can use within the Service Worker to synchronously load scripts.

Refresh the application. The Workbox is loaded message should appear in the DevTools console.

Precaching

Next: pre-cache some static assets (things like index.html, our CSS and JS files).

Workbox handles this through pre-caching. Static assets get downloaded and cached while the Service Worker installs. By the time the service worker activates, those files are already sitting in the cache.

Our application is small, but listing assets for precaching manually would be tedious. Workbox can generate the list automatically; we just provide patterns. There are three ways: CLI, Gulp, or Webpack. We’ll use Gulp.

Since we’re using Gulp, it makes sense to also build our application and drop it into a separate folder:

// Gulpfile.js

const gulp = require('gulp');

const del = require('del');

const workboxBuild = require('workbox-build');

const clean = () => del(['build/*'], { dot: true });

gulp.task('clean', clean);

const copy = () => gulp.src(['app/**/*']).pipe(gulp.dest('build'));

gulp.task('copy', copy);

const serviceWorker = () => {

return workboxBuild.injectManifest({

swSrc: 'app/sw.js',

swDest: 'build/sw.js',

globDirectory: 'build',

globPatterns: ['*.css', '*.css.map', 'index.html', 'app.js'],

});

};

gulp.task('service-worker', serviceWorker);

const build = gulp.series('clean', 'copy', 'service-worker');

gulp.task('build', build);

const watch = () => gulp.watch('app/**/*', build);

gulp.task('watch', watch);

gulp.task('default', build);

Clean the build folder, copy all files from the working folder (app), inject the static assets. Self-explanatory.

How does Workbox know where to inject these assets? We tell it, in sw.js:

workbox.precaching.precacheAndRoute([]);

Run the gulp task and open build/sw.js. A list of assets should be visible:

workbox.precaching.precacheAndRoute([

{

url: 'app.css',

revision: 'aaa3faaa6a5432c4d3c74c61368807ba',

},

// other assets ...

]);

The revision tells Workbox whether an asset has changed and needs re-caching.
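As an illustration of the idea, a revision can be derived from a file's contents, so it changes only when the file does. The sketch below assumes an MD5 content hash (the 32-character hex string above is consistent with that, but treat it as an assumption rather than a statement about Workbox internals). buildManifestEntry is a hypothetical helper, not a Workbox API:

```javascript
const crypto = require('crypto');

// Hypothetical helper: builds one precache entry from a URL and file contents.
function buildManifestEntry(url, contents) {
  const revision = crypto.createHash('md5').update(contents).digest('hex');
  return { url, revision };
}

const before = buildManifestEntry('app.css', 'h1 { background: yellow; }');
const after = buildManifestEntry('app.css', 'h1 { background: red; }');

// Same URL, different contents: the revisions differ, signalling a re-cache.
console.log(before.revision !== after.revision); // true
```

Because the revision depends only on the contents, rebuilding without touching a file leaves its entry unchanged and the cached copy stays valid.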

Run the application with the browser console open. Workbox logs the precached URLs.

Workbox stores the mapping between assets, revisions and full URLs in IndexedDB. Check your IndexedDB to see it. More on Workbox precaching here.

With some assets cached, let’s try the application offline. We see the <h1> element only. Better than nothing. Everything else fails because we depend on JavaScript to build the news cards, and that code fetches data from the API, which is unreachable offline (note the red error in the console).

An offline API

We need more to get a genuinely offline-capable application. The missing piece: API data. With a network connection, we can hit the API and pull data. Without one, we can’t. We need a mechanism to stash the API response and retrieve it when there’s no connection.

Workbox to the rescue. Remember those caching strategies? Let’s put one to work:

workbox.routing.registerRoute(

'/api/news',

workbox.strategies.staleWhileRevalidate({

cacheName: 'api-cache',

})

);

We’re capturing requests to /api/news and applying the staleWhileRevalidate caching mechanism, with a named cache.

What does staleWhileRevalidate do? If cached data exists, return it immediately, but also fire off a fetch for fresh data and cache the response. Next time, we get the updated version.
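The behaviour can be sketched in a few lines. This is not Workbox's implementation, only a minimal model of the strategy: the cache is a plain Map and the network call is an injected function, both assumptions made so the sketch runs outside a Service Worker.

```javascript
// Stale-while-revalidate with injected dependencies:
//   cache   - a Map from URL to response
//   fetchFn - async function (url) => response
async function staleWhileRevalidate(url, cache, fetchFn) {
  // Always start a network request and refresh the cache when it resolves.
  const networkUpdate = fetchFn(url).then((fresh) => {
    cache.set(url, fresh);
    return fresh;
  });

  if (cache.has(url)) {
    // Ignore background failures (for example when offline): the caller
    // already has the stale copy.
    networkUpdate.catch(() => {});
    return cache.get(url);
  }

  // Nothing cached yet: the caller has to wait for the network.
  return networkUpdate;
}
```

The first call with an empty cache waits for the network. Every later call returns the cached copy right away, and the refreshed copy is what the call after that receives.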

Rerun gulp, refresh the application, then take it offline.

Because we have an active service worker, we need to clear it out. Easiest way: go to the ‘Application’ tab in Chrome DevTools, find ‘Clear storage’, and hit ‘Clear site data’. This wipes active service workers and cached data.

The data now shows up even when offline. But the images are missing. Makes sense: they’re hosted on Cloudinary and need an HTTP call to fetch.

Caching images

We can cache images too and make them available offline, using the cache-first strategy we covered earlier:

workbox.routing.registerRoute(

new RegExp('^https://res.cloudinary.com'),

workbox.strategies.cacheFirst({

cacheName: 'cloudinary-images',

plugins: [

cloudinaryPlugin,

new workbox.expiration.Plugin({

maxEntries: 50,

purgeOnQuotaError: true,

}),

],

})

);

This matches all requests to Cloudinary via a regular expression. We’re also creating a separate Workbox plugin for Cloudinary images. Beyond the cache name, we can configure how many entries the cache holds (maxEntries) and whether the cache can be purged if the app exceeds available storage (purgeOnQuotaError).
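To get a feel for what an entry limit means in practice, here is a small in-memory model. It assumes the least recently used entry is dropped first once the limit is exceeded. BoundedCache is a hypothetical class for illustration only and says nothing about how Workbox keeps its own bookkeeping:

```javascript
// A Map remembers insertion order, so re-inserting a key on every access
// keeps the least recently used entry at the front.
class BoundedCache {
  constructor(maxEntries) {
    this.maxEntries = maxEntries;
    this.entries = new Map();
  }

  get(key) {
    if (!this.entries.has(key)) return undefined;
    const value = this.entries.get(key);
    this.entries.delete(key); // move the key to the "most recent" end
    this.entries.set(key, value);
    return value;
  }

  set(key, value) {
    this.entries.delete(key);
    this.entries.set(key, value);
    // Evict from the "least recent" end until we are within the limit.
    while (this.entries.size > this.maxEntries) {
      const oldestKey = this.entries.keys().next().value;
      this.entries.delete(oldestKey);
    }
  }
}
```

With a limit of 50, as configured above, the 51st distinct image would push out whichever image had gone unused the longest.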

More on Workbox Strategies.

What does the cloudinaryPlugin do exactly? It checks whether the request is an image, then manipulates the Cloudinary URL before sending the request through. (The request is intercepted, so no image is served until the plugin finishes.) Once the final URL is assembled, the request fires with the modified URL:

const cloudinaryPlugin = {

requestWillFetch: async ({ request }) => {

if (/\.(jpg|png|gif|webp)$/.test(request.url)) {

let url = request.url.split('/');

let newPart;

let format = 'f_auto';

switch (

navigator && navigator.connection

? navigator.connection.effectiveType

: ''

) {

case '4g':

newPart = 'q_auto:good';

break;

case '3g':

newPart = 'q_auto:eco';

break;

case '2g':

case 'slow-2g':

newPart = 'q_auto:low';

break;

default:

newPart = 'q_auto:good';

break;

}

url.splice(url.length - 2, 0, `${newPart},${format}`);

const finalUrl = url.join('/');

const newUrl = new URL(finalUrl);

return new Request(newUrl.href, { headers: request.headers });

}

// Not an image: pass the original request through unchanged.

return request;

},

};

The code above is covered in detail in the article Adaptive image loading based on network speed.
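The URL manipulation is easier to follow, and to test, when pulled out of the plugin into plain functions. The sketch below mirrors the logic above. qualityFor and adaptCloudinaryUrl are hypothetical names, and like the plugin it assumes the image sits exactly one folder below the upload segment:

```javascript
// Map a Network Information API effectiveType to a Cloudinary quality flag.
function qualityFor(effectiveType) {
  switch (effectiveType) {
    case '3g':
      return 'q_auto:eco';
    case '2g':
    case 'slow-2g':
      return 'q_auto:low';
    default:
      return 'q_auto:good'; // '4g' and unknown connection types
  }
}

// Insert the transformation segment two positions from the end of the path,
// i.e. before the folder that contains the image.
function adaptCloudinaryUrl(imageUrl, effectiveType) {
  const parts = imageUrl.split('/');
  parts.splice(parts.length - 2, 0, `${qualityFor(effectiveType)},f_auto`);
  return parts.join('/');
}

console.log(
  adaptCloudinaryUrl(
    'https://res.cloudinary.com/tamas-demo/image/upload/pwa/dzeko.jpg',
    '3g'
  )
);
// https://res.cloudinary.com/tamas-demo/image/upload/q_auto:eco,f_auto/pwa/dzeko.jpg
```

Inside the plugin, the second argument would come from navigator.connection.effectiveType when it is available.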

Time for a final test. Run gulp again, refresh the application, and take it offline.

The application now works offline. It loads data from the API, loads images, and applies manipulation to those images, which means the whole site loads faster. Earlier we had ten requests totalling 609 KB. Now we have 15 requests loading 164 KB of assets. (The extra requests come from the service worker.)

Try loading the application on a 3G connection too. Select ‘Slow 3G’ from DevTools under the Network tab. Total asset weight: only 6.7 KB.

Conclusion

We’ve built an application using Workbox.js that caches REST API responses and stores images in the browser’s cache. We also looked at how to store optimised images by creating a Cloudinary Workbox plugin.